Apache Hive is a distributed, fault-tolerant data warehouse system built on top of Apache Hadoop that lets you read, write, and manage petabytes of data using SQL. It converts SQL-like queries written in HiveQL into MapReduce or Apache Tez jobs that run across a Hadoop cluster. Hive is best for large-scale batch analytics where data volume is high, query latency of minutes is acceptable, and your team already knows SQL. The current release is Apache Hive 4.2.0 with Apache Iceberg v3 integration, JDK 21 support, and built-in auto-compaction.

Data warehouses built on Hadoop needed a way for analysts to query petabytes of data without writing Java MapReduce code. Apache Hive solved that problem when engineers at Facebook built it in 2008 to enable SQL-based analysis over Facebook's massive internal data lake.

The project was contributed to the Apache Software Foundation, graduated to a top-level project, and has now accumulated 18 years of production optimization across some of the most data-intensive organizations in the world.

This guide covers what Apache Hive is and how it works, its full architecture, how to write HiveQL queries, what changed in Hive 4.0 and 4.2.0, how Hive compares to Spark, Presto, and Impala, when to use Hive versus when not to, and how Hive fits into a modern data lakehouse architecture with Apache Iceberg.

Read: How to Build a Data Warehouse | Data Analytics Software Development | Apache Kafka Guide

What Is Apache Hive?

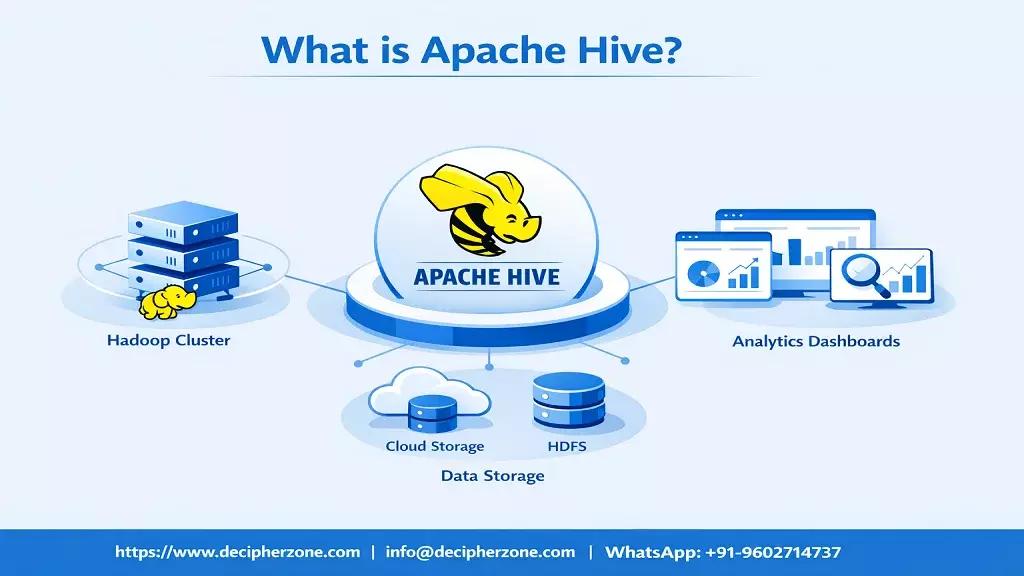

Apache Hive is an open-source data warehouse system designed to process and analyze large datasets stored in distributed storage like HDFS, Amazon S3, or Azure Data Lake Storage.

It provides a query language called HiveQL that closely resembles standard SQL, so analysts and engineers familiar with relational databases can work with petabyte-scale data without learning the underlying distributed computing framework.

Hive works by translating HiveQL queries into execution plans that run on Apache Tez or MapReduce, both of which operate on YARN (Yet Another Resource Negotiator) within the Hadoop ecosystem. The result is a system that can process datasets far too large for any single machine while exposing an interface that any SQL-fluent analyst can use.

The Hive Metastore stores all table schemas, partition information, and metadata separately from the actual data files. This is what makes Hive a "schema on read" system: the data exists as raw files in distributed storage, and the schema is imposed when you query the data rather than when you load it. This approach is far more flexible than traditional relational databases that require schema definition before data ingestion.

Hive was originally created by Joydeep Sen Sarma and Ashish Thusoo at Facebook to handle the company's massive data analysis needs. Today it runs in production at organizations including FINRA (the largest independent securities regulator in the United States), Guardian Insurance (processing data for 27 million members), and hundreds of enterprises using Amazon EMR for managed Hadoop deployments.

How Apache Hive Works: Step by Step

Understanding the execution path of a HiveQL query helps you design better schemas, tune performance, and debug slow queries effectively.

Step 1: Query submission

A user submits a HiveQL query through one of three interfaces: the Beeline command-line client, a JDBC or ODBC application such as Tableau or Power BI, or a programmatic Hive client. All of these connect to HiveServer2, which manages incoming connections.

Step 2: HiveServer2 accepts the query

HiveServer2 receives the query and passes it to the Hive driver. The driver acts as the query controller throughout the execution lifecycle. It calls the compiler to parse and analyze the query.

Step 3: Compiler parses and creates an execution plan

The compiler parses the HiveQL query, checks it against the Metastore for table schemas and column types, and then generates an Abstract Syntax Tree (AST). The optimizer applies cost-based optimization rules to determine the most efficient execution plan. The result is a Directed Acyclic Graph (DAG) of tasks.

Step 4: Execution engine runs the job

The execution engine converts the DAG into actual Tez or MapReduce jobs and submits them to YARN. YARN allocates cluster resources and distributes the work across the Hadoop cluster nodes.

Step 5: Data read from distributed storage

The execution jobs read data directly from HDFS, S3, Azure Data Lake, or other configured storage systems. Hive supports multiple file formats including ORC (Optimized Row Columnar), Parquet, Avro, and plain text. ORC is the recommended format for most workloads because it provides the best combination of compression and query performance.

Step 6: Results returned to the client

Query results flow back through HiveServer2 to the client. For large result sets, the data is typically written to another storage location rather than returned directly, especially in batch ETL contexts.

Apache Hive Architecture: All Components Explained

Apache Hive consists of seven primary components that work together to enable distributed SQL analytics.

HiveServer2 (HS2)

HiveServer2 is the service that accepts and manages client connections. It runs as a composite process combining a Thrift Hive Service for programmatic access and an embedded Jetty web server for a web UI.

HS2 supports multiple concurrent sessions, which makes it suitable for multi-user environments where many analysts query the same cluster simultaneously. It also handles query planning, session management, and authentication.

Hive Metastore (HMS)

The Hive Metastore is the central metadata repository for Apache Hive. It stores all table definitions, column schemas, data types, partition structures, storage locations, and serialization information in a relational database (typically MySQL, PostgreSQL, or Derby).

The Metastore is also used by other tools in the ecosystem: Apache Spark, Impala, Presto, and Trino can all read from the same Hive Metastore, making it the de facto metadata standard for Hadoop-based data lakes. In Hive 4.2.0, a standalone HMS REST Catalog is available for cloud-native deployments.

Hive Driver

The Driver manages the complete lifecycle of a HiveQL statement from receipt through execution and result return. It coordinates the compiler, optimizer, and execution engine, and maintains a session handle for each query.

The Driver is also responsible for hook management, which allows organizations to inject custom logic before and after query execution for purposes like audit logging or access control.

Compiler and Optimizer

The compiler translates HiveQL into an execution plan. Its key stages are semantic analysis (type checking, scope resolution), logical plan generation, and optimization. The cost-based optimizer (CBO), powered by Apache Calcite, analyzes table and column statistics to select the most efficient join ordering, push down filters to reduce data scanned, and determine whether to use broadcast joins for small tables.

In Hive 4.0, the CBO received significant improvements with new rules for anti-join support, branch pruning, and column histogram statistics.

Execution Engine: Tez and MapReduce

Apache Tez is the default execution engine in Hive 3.x and 4.x. Tez represents queries as DAGs that minimize unnecessary data shuffles and intermediate disk writes compared to MapReduce.

For a query that would require three sequential MapReduce jobs, Tez often reduces it to a single job with multiple stages sharing a pipeline, which dramatically reduces end-to-end latency. MapReduce remains available but is not recommended for new deployments.

LLAP (Live Long and Process)

LLAP is Hive's subsecond interactive query layer. It maintains long-running daemon processes on cluster nodes that keep data cached in memory between queries, eliminating the startup overhead of spawning new containers for each query.

With LLAP enabled, Hive can serve BI tool queries with latencies in the range of seconds rather than minutes. LLAP is optional but strongly recommended for use cases where users run interactive queries through tools like Tableau or Apache Superset.

HCatalog and WebHCat

HCatalog provides a table and storage management layer that sits on top of the Hive Metastore. It allows tools like MapReduce and Apache Pig to use the same table definitions as Hive without redefining the schema for each engine.

WebHCat provides a REST API for HCatalog operations, enabling external applications and microservices to interact with Hive metadata programmatically without a JDBC connection.

HiveQL: Writing Queries in Apache Hive

HiveQL is Hive's query language. It follows standard SQL syntax for most operations with some differences related to Hive's distributed nature and the schema-on-read model.

Creating a table

CREATE TABLE sales_data (

transaction_id STRING,

customer_id STRING,

product_id STRING,

sale_amount DOUBLE,

sale_date DATE,

region STRING

)

PARTITIONED BY (year INT, month INT)

STORED AS ORC;

Tables in Hive are stored in HDFS or compatible storage. The PARTITIONED BY clause splits data into separate directories per partition value, which dramatically reduces the data scanned for queries that filter by partition columns. STORED AS ORC specifies the file format, which affects both storage efficiency and query speed.

Loading data into a Hive table

LOAD DATA INPATH '/raw-data/sales/2026/01/'

INTO TABLE sales_data

PARTITION (year=2026, month=1);

Querying with aggregation and partitioning

SELECT

region,

SUM(sale_amount) AS total_revenue,

COUNT(*) AS transaction_count,

AVG(sale_amount) AS avg_order_value

FROM sales_data

WHERE year = 2026

AND month BETWEEN 1 AND 3

GROUP BY region

ORDER BY total_revenue DESC;

The WHERE clause filters on partition columns (year and month) before scanning any data. Hive uses partition pruning to skip every partition that does not match the filter, which can reduce data scanned by 90% or more on well-partitioned tables.

Creating a materialized view

CREATE MATERIALIZED VIEW regional_summary

AS

SELECT

region,

year,

month,

SUM(sale_amount) AS total_revenue

FROM sales_data

GROUP BY region, year, month;

Materialized views, introduced as a production feature in Hive 4.0, pre-compute and cache query results. When Hive's query rewriting detects that a query can be answered from a materialized view, it substitutes the view automatically, reducing query time for common aggregation patterns from minutes to seconds.

HiveQL differences from standard SQL

The most important differences to know: Hive does not support row-level updates or deletes in external tables (ACID tables require ORC format for DML operations). Subqueries are supported but must be in the FROM clause as derived tables rather than correlated subqueries.

The INSERT INTO and INSERT OVERWRITE syntax differs from standard SQL. Window functions follow the SQL 2011 standard. NULL handling follows SQL standard behavior.

Apache Hive 4.0 and 4.2.0: What Changed

Apache Hive 4.0 was released in April 2024 with over 5,000 commits. Apache Hive 4.2.0 followed as the current release, adding further improvements. These releases represent the largest feature addition in Hive's history and position Hive as a first-class participant in the modern lakehouse architecture.

Apache Iceberg integration (the most important new capability)

Hive 4.0 introduced Iceberg table support and Hive 4.2.0 expanded this to Iceberg v3 capabilities including Deletion Vectors, column defaults, Z-ordering, and the Variant type. Apache Iceberg is a modern open table format that adds ACID transactions, time travel queries, schema evolution, and partition evolution to data lake storage without requiring data movement.

With this integration, Hive can now read and write Iceberg tables using standard SQL, which means Hive fits naturally into modern lakehouse architectures alongside Spark, Flink, and Trino.

JDK 21 support

Hive 4.2.0 supports Java 21, the current long-term support release. This brings virtual thread support (through Project Loom), improved garbage collection through ZGC, and pattern matching for switch expressions. For production Hive deployments, JDK 21 delivers measurable improvements in concurrent query throughput.

Docker support

Official Apache Hive Docker images are now available on Docker Hub. This greatly simplifies local development, testing, and cloud-native deployments because teams can spin up a fully configured Hive environment in minutes without setting up a full Hadoop cluster.

Materialized views

Hive 4.0 made materialized views production-ready with automatic query rewriting. When the query optimizer detects that a query's result can be served from a pre-computed materialized view, it substitutes the view automatically. This is the feature that closes the performance gap between Hive and interactive query engines for common BI dashboard patterns.

Built-in auto-compaction

ACID transactions in Hive create delta files that accumulate over time. Without compaction, query performance degrades as the number of small files grows. Hive 4.2.0 introduces built-in auto-compaction that triggers automatically based on configurable thresholds, eliminating the need for manual compaction maintenance scripts.

HMS REST Catalog

The standalone Hive Metastore now exposes a REST Catalog API compatible with Apache Iceberg's REST Catalog specification. This enables tools like Spark, Flink, and Trino to connect to HMS via HTTP rather than requiring a Thrift connection, which simplifies cloud deployments and multi-engine architectures.

Apache Hive vs Spark vs Presto vs Impala

This is the question most teams face when building a new data platform. Each engine has genuine strengths for specific patterns. The right answer is almost always to use more than one engine, with each handling the workloads it is best at.

| Dimension | Apache Hive | Apache Spark | Presto/Trino | Apache Impala |

|---|---|---|---|---|

| Processing model | Batch (Tez DAG) | In-memory, micro-batch, streaming | In-memory distributed SQL | MPP SQL, in-memory |

| Query latency | Minutes (subsecond with LLAP) | Seconds to minutes | Seconds (interactive) | Seconds (interactive) |

| Data volume sweet spot | Petabyte-scale batch | Terabyte to petabyte | Terabyte to petabyte | Terabyte scale |

| SQL compatibility | SQL 2003 and 2011 | Spark SQL (partial) | ANSI SQL (strong) | Impala SQL (strong) |

| Best for | ETL pipelines, scheduled reports, large aggregations | ML pipelines, streaming, complex transformations | Ad hoc interactive queries, cross-source federation | Low-latency BI queries on Hadoop clusters |

| ACID support | Full (ORC tables) | Via Delta Lake | Limited (read only for most) | Limited |

| Metastore integration | Native (is the metastore) | Reads Hive Metastore | Reads Hive Metastore | Reads Hive Metastore |

| Deployment complexity | Medium (Hadoop required) | Medium | Low (standalone) | Medium (requires Hadoop) |

| Concurrency scaling | Good (LLAP) | Good | Excellent | Good |

The practical guidance: use Hive for scheduled ETL jobs, large-scale data transformation, and long-running analytics on petabyte datasets where batch latency is acceptable. Use Presto or Trino for interactive ad hoc queries where analysts expect results in seconds.

Use Spark when you need to combine data processing, machine learning, and streaming in one framework. Use both Hive and Presto on the same cluster, pointing at the same Hive Metastore, for the best of both worlds.

Key Features of Apache Hive

HiveQL: SQL for big data

HiveQL is Hive's query language. It supports standard SQL constructs including SELECT, JOIN, GROUP BY, HAVING, ORDER BY, window functions, subqueries, and common table expressions (CTEs).

The familiarity means data analysts who know SQL can be productive with Hive immediately, without learning Java MapReduce or Python Spark APIs. This was the original insight that made Hive indispensable at Facebook and at every large organization that followed.

Schema on read

Unlike traditional relational databases that enforce schema at write time, Hive applies schema when data is read. This means you can ingest raw data into HDFS or S3 first and define the schema later, which dramatically accelerates data ingestion pipelines. Schema evolution is also simpler because adding a column to a Hive table does not require rewriting existing data files.

ACID transactions

Hive supports full ACID (Atomicity, Consistency, Isolation, Durability) transactions for ORC-format tables. This enables INSERT, UPDATE, and DELETE operations at scale, which were previously not possible in Hadoop-based data systems. ACID support is what allows Hive to serve use cases like slowly changing dimensions and late-arriving data corrections in data warehouse workloads.

Storage format flexibility

Hive supports multiple storage formats. ORC (Optimized Row Columnar) provides the best performance for analytics queries through columnar compression, predicate pushdown, and bloom filters.

Parquet is preferred for cross-engine interoperability with Spark and Presto. Avro suits schema-heavy data with frequent evolution. Text and CSV formats are supported for compatibility with legacy systems but deliver much lower query performance.

Partitioning and bucketing

Partitioning splits table data into separate storage directories based on column values such as year, month, or region. Queries that filter on partition columns can skip entire partitions, reducing data scanned by orders of magnitude.

Bucketing distributes data into a fixed number of files based on a hash of a specified column, which improves the performance of joins and GROUP BY operations on high-cardinality columns.

Security and governance

Hive integrates with Kerberos for authentication, Apache Ranger for fine-grained authorization (column-level, row-level, and masked data access), and Apache Atlas for data lineage and metadata governance. These integrations make Hive suitable for regulated industries where data access control, audit trails, and data lineage documentation are compliance requirements.

When to Use Apache Hive

Apache Hive is the right choice for these scenarios:

Large-scale ETL pipelines

When you need to transform, aggregate, and load terabytes to petabytes of raw data on a regular schedule, Hive's batch processing model is well-matched to the workload. The combination of partitioning, ORC columnar storage, and Tez execution makes Hive efficient for the kind of heavy transformation work that forms the foundation of data warehousing.

SQL-based analytics by non-engineers

Business analysts who know SQL can use Hive directly without learning a new API. This is what makes Hive valuable in organizations where data analysts outnumber data engineers: the SQL familiarity reduces the skill barrier and the volume of custom code that needs to be written and maintained.

Hadoop ecosystem integration

When your data platform is already built on Hadoop with HDFS, YARN, and other Apache ecosystem tools, Hive fits naturally as the SQL layer without requiring architectural change. Many organizations run Hive alongside HBase, Kafka, Spark, and Presto on the same Hadoop cluster sharing the same HDFS storage.

Compliance and regulated data environments

The Ranger integration for column-level access control and Atlas integration for data lineage make Hive appropriate for financial services, healthcare, and government data platforms where regulatory requirements mandate fine-grained access control and audit documentation.

Cost-optimized long-running batch analytics

Hive running on commodity hardware is far cheaper per TB processed than proprietary cloud data warehouses like Snowflake or Redshift for comparable workloads at petabyte scale.

When NOT to Use Apache Hive

Hive is the wrong choice in several common scenarios that competitors often do not discuss clearly:

You need low-latency interactive queries without LLAP

Without LLAP enabled, Hive queries start new execution containers for each query, which introduces startup latency of 10 to 30 seconds even for simple queries. If your use case requires results in under 5 seconds for ad hoc queries, Presto, Trino, or Impala will serve you better.

Your data volumes are small

Hive's distributed execution overhead makes it slower than a single PostgreSQL or DuckDB instance for datasets under several hundred gigabytes. The distributed framework has startup costs that are only worthwhile when the data volume justifies them.

You need transactional OLTP workloads

Hive is an analytical (OLAP) system. It is explicitly not designed for online transaction processing where individual records are inserted, updated, or retrieved with millisecond latency. Hive ACID supports DML operations but they are not comparable to PostgreSQL or MySQL for transactional workloads.

You are building real-time or streaming pipelines

Hive processes data in batches. For streaming data that needs to be processed and queried within seconds of arrival, Apache Kafka combined with Apache Flink or Spark Streaming is the appropriate architecture. Hive can be part of a Lambda or Kappa architecture for the batch layer but should not be in the hot path.

Apache Hive in the Modern Data Lakehouse

The data lakehouse architecture combines the scalability of data lakes with the SQL analytics capabilities and ACID reliability of data warehouses. Apache Hive has evolved to be a natural participant in this architecture through its integration with Apache Iceberg.

In a typical 2026 data lakehouse deployment, raw data lands in object storage (S3, ADLS, or GCS). Apache Iceberg provides the transactional table layer with ACID guarantees, time travel, schema evolution, and partition evolution. Multiple compute engines (Hive for batch ETL, Spark for ML pipelines, Trino for interactive queries) all read and write the same Iceberg tables through the Hive Metastore or an Iceberg REST Catalog.

This multi-engine architecture replaces the older pattern where each compute engine maintained its own table format and storage, which created data duplication and synchronization problems.

Hive's role in this stack is specifically the batch ETL and scheduled reporting layer. It handles the heavy transformation jobs that run on a schedule, then Trino or Impala serves the interactive query layer on top of the same data. The Hive Metastore acts as the shared metadata backbone for all engines.

Read: Data Analytics Software Development | Benefits of Cloud Computing | Apache Beam Using Java

Apache Hive Use Cases by Industry

Financial services

FINRA (the Financial Industry Regulatory Authority) uses Amazon EMR with Apache Hive on an S3 data lake to process up to 90 billion trading events using SQL. Compliance reporting, fraud pattern detection on historical transaction archives, and regulatory filings all run through Hive-based pipelines.

The cloud data lake architecture delivered cost savings of up to $20 million compared to FINRA's previous on-premises solution, and reduced the time needed for recovery and system upgrades.

Hive's SQL interface integrates directly with the compliance analysts' existing tooling and the Ranger integration meets their regulatory access control requirements.

Insurance and healthcare

Guardian Insurance processes data for 27 million members using Amazon EMR with Apache Hive on an S3 data lake. Patient data analytics, claims processing pipelines, and actuarial modeling workloads are well-matched to Hive's batch processing model. The Kerberos and Ranger security stack meets HIPAA data isolation requirements.

Investment management

Vanguard, the largest provider of mutual funds in the United States, uses Amazon EMR with Apache Hive on an S3 data lake. The Hive Metastore contains all metadata about the data and tables in the cluster, enabling 150+ data analysts to run ad hoc SQL queries directly on data stored in S3.

Migrating to the S3 data lake with EMR and Hive reduced EC2 and EMR costs by $600,000 while improving operational efficiency across the entire analyst team.

This case study is typical of large financial institutions where Hive provides SQL access for many analysts over a shared, centrally managed data lake without requiring each analyst to understand the underlying distributed infrastructure.

Retail and e-commerce

Large retailers use Hive for daily inventory reconciliation, customer behavior analytics across years of purchase history, and campaign attribution analysis across billions of clickstream events.

The partitioning capability is essential for these workloads because queries almost always filter by date range, and date-based partitioning reduces data scanned from petabytes to gigabytes.

Technology and media

The original Facebook use case (processing billions of events per day for analytics and recommendation systems) remains representative of how media and ad-tech companies use Hive. Log processing pipelines that collect, transform, and aggregate web events across distributed infrastructure are one of the most common Hive workloads.

Getting Started with Apache Hive

Option 1: Docker (fastest for learning)

With Hive 4.2.0, official Docker images are available on Docker Hub. You can run a single-node Hive setup without configuring Hadoop by pulling the official image and running it with a mounted data directory. This is the recommended approach for learning HiveQL and testing query patterns.

Option 2: Apache Ambari or Cloudera CDP

For production deployments on bare metal or VMs, Apache Ambari and Cloudera CDP provide managed installation and configuration of Hive within a full Hadoop cluster. These frameworks handle the complexity of configuring YARN, HDFS, ZooKeeper, and Hive together.

Option 3: Managed cloud (Amazon EMR, Azure HDInsight, Google Dataproc)

All three major cloud providers offer Hive as part of a managed Hadoop service. Amazon EMR is the most commonly used deployment model for new Hive installations in 2026 because it integrates Hive with S3 for storage and eliminates HDFS management overhead. You pay only for compute time and S3 storage rather than maintaining always-on cluster nodes.

Build Big Data Solutions with Decipher Zone

Decipher Zone Technologies builds custom data engineering and analytics platforms for enterprises across the US, UAE, and Europe. Our team has experience designing Hadoop-based data architectures, implementing Hive-based ETL pipelines, integrating Hive with modern lakehouse tools like Apache Iceberg and Apache Spark, and migrating legacy data warehouse workloads to cloud-managed Hadoop environments.

Contact us to discuss your big data architecture. | Hire dedicated data engineers at $25 to $49 per hour.

Frequently Asked Questions: Apache Hive

What is Apache Hive used for?

Apache Hive is used for large-scale batch data processing and analytics on distributed storage systems. Its most common use cases are ETL pipelines that transform and aggregate petabytes of raw data, scheduled analytical reports over large historical datasets, data warehousing on Hadoop with SQL access for analysts, log processing and clickstream analytics, and compliance reporting in regulated industries. Hive is the SQL layer that makes HDFS and S3-based data lakes accessible to people who know SQL without requiring them to write distributed computing code.

What is the difference between Apache Hive and Apache Hadoop?

Hadoop is the underlying distributed computing framework that provides distributed file storage (HDFS) and resource management (YARN) for running parallel computations across a cluster of commodity hardware. Hive is a data warehouse system built on top of Hadoop. Hive uses Hadoop's storage (HDFS) and resource management (YARN) but adds a SQL interface, a metadata repository (HMS), a query compiler and optimizer, and an execution layer (Tez). The relationship is similar to a relational database and the operating system it runs on: one provides infrastructure, the other provides the data management and query layer.

What is the difference between Apache Hive and Apache Spark?

Hive is optimized for batch SQL queries on large datasets stored in distributed storage. It translates SQL into Tez or MapReduce jobs and is designed for scheduled pipelines, ETL, and large aggregations. Spark is a general-purpose distributed computing engine that supports SQL (Spark SQL), machine learning (MLlib), stream processing (Structured Streaming), and graph processing in one framework with in-memory execution. For pure SQL analytics on large datasets, Hive with LLAP is competitive with Spark SQL. For machine learning, streaming, or complex multi-step processing, Spark is the better choice. Many organizations run Hive and Spark on the same cluster, with both reading from the same Hive Metastore.

What is HiveQL?

HiveQL (also called HQL) is Hive's query language. It follows SQL syntax closely, supporting standard constructs like SELECT, JOIN, GROUP BY, HAVING, ORDER BY, window functions, subqueries, CTEs, and DML operations on ACID tables. The main differences from standard SQL are that Hive uses a schema-on-read model, partitioning and bucketing are first-class constructs, UPDATE and DELETE require ACID-enabled ORC tables, and some advanced SQL features like correlated subqueries have limitations. HiveQL queries are translated into execution plans that run on Tez or MapReduce across the Hadoop cluster.

What is the Hive Metastore?

The Hive Metastore (HMS) is the central metadata repository for Apache Hive. It stores all table schemas, partition definitions, column types, storage locations, and serialization information in a relational database (typically MySQL or PostgreSQL). The Metastore is also used by Spark, Presto, Trino, and Impala, making it the de facto metadata standard for Hadoop-based data platforms. In Hive 4.2.0, HMS exposes a REST Catalog API compatible with the Apache Iceberg REST Catalog specification, enabling cloud-native tools to connect without a Thrift dependency.

What are the major new features in Apache Hive 4.0 and 4.2.0?

Apache Hive 4.0 (released April 2024) introduced Apache Iceberg table integration with branch/tag support and partition-level operations, improved ACID transactions and locking, materialized views with automatic query rewriting, official Docker images for easier deployment, and compiler improvements including HPL/SQL support, anti-join optimization, and better cost-based optimization rules. Apache Hive 4.2.0 extended these with Iceberg v3 capabilities (Deletion Vectors, column defaults, Z-ordering, Variant type), JDK 21 support, built-in auto-compaction, and HMS REST Catalog support. The cumulative change across 4.0 covered over 5,000 commits.

When should I use Apache Hive instead of Presto or Trino?

Use Hive for scheduled batch ETL jobs, long-running aggregations over petabyte datasets, and workloads where query latency of minutes is acceptable. Hive handles heavy writes and complex transformations better than Presto. Use Presto or Trino for interactive ad hoc queries where analysts expect results in seconds, for federated queries that span multiple data sources, and for BI tool connectivity. A common production pattern is to run both Hive and Presto or Trino on the same cluster sharing the same Hive Metastore: Hive handles the batch processing while Presto serves the interactive query layer over the same data.

How does Apache Hive integrate with Apache Iceberg?

Apache Hive 4.0 and 4.2.0 support reading and writing Apache Iceberg tables using standard HiveQL. This means you can run CREATE TABLE AS SELECT or INSERT INTO on Iceberg-formatted tables directly from Hive without any additional tooling. Hive 4.2.0 supports Iceberg v3 features including Deletion Vectors for more efficient row-level deletes, Z-ordering for improved query performance on high-cardinality columns, and the Variant type for semi-structured data. The Iceberg integration allows Hive to participate in modern lakehouse architectures where multiple engines (Spark, Flink, Trino) share the same Iceberg tables through a common catalog.

Author Profile: Mahipal Nehra is the Digital Marketing Manager at Decipher Zone Technologies, specializing in content strategy and tech-driven marketing for software development and digital transformation.

Follow on LinkedIn or explore more at Decipher Zone.